Black Mirror is one of television’s most thought-provoking drama series. With its dystopian view of the future, it magnifies concerns about the advent of new technology. Despite its science-fiction premises, the show remains grounded enough that its themes cannot be dismissed.

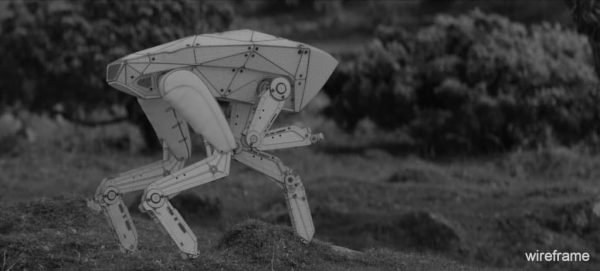

Last season was no exception. Written by showrunner Charlie Brooker and directed by David Slade, the fifth episode of season four, “Metalhead”, took the viewer to a world after the collapse of normal society, where robots rule. In the episode, Bella (Maxine Peake) attempts to flee from a robotic “dog”. The piece visually referenced the robotic tests from companies such as Boston Dynamics and Festo Robotics, in what was one of the bleakest (and best) stories from last season.

The episode was shot entirely in black and white on RED EPIC Monochrome cameras. As such, “Metalhead” delivers a stark visual style that matches the bleak future it portrays. The visual effects were created by Double Negative (DNEG) in London.

For their work, the DNEG team took home the BAFTA Craft Award in the ‘Special, Visual and Graphic Effects’ category at April’s ceremony in London. This is the fifth award that DNEG has won in the last few months, having previously been honoured with an Oscar and a BAFTA for its work on Blade Runner 2049 and two VES awards for Blade Runner 2049 and Christopher Nolan’s Dunkirk.

DNEG TV’s team created a photo-realistic quadruped robot, built from technology that could conceivably exist in the near future. The team created over 300 shots for the episode, including 200 shots involving bespoke character animation. Starting with a concept from series designer Joel Collins, DNEG TV took the ‘dog’ design further by trying to understand how such a robot would actually work.

To ground the mechanical systems of the robot in reality, the team looked at cutting-edge research from pioneering robotics manufacturers around the world before extrapolating the results to create a plausible near-future concept. The design had to feel utilitarian. The team were careful not to add superfluous details; every component had a specific purpose, from shock absorbers and internal cooling fans to the ‘LIDAR scanning equipment’ inside the visor. The final model ended up comprising over 1,500 separate parts.

We sat down with Mike Bell, DNEG VFX Supervisor, to discuss the project.

fxphd: Was it always the plan to shoot in monochrome, or did you consider grading it to monochrome in post?

Mike Bell: It was director David Slade’s idea to shoot the episode in black & white. Although it would have been easier from our perspective to shoot in colour and do a post grade, David did some camera tests before the shoot with the monochrome and colour sensors, and the ungraded monochrome footage looked better. We hadn’t needed to work with black & white footage before, so we came up with a plan to make it work.

fxphd: I believe the native ISO in the Monochrome’s default metadata settings is ISO 2000?

Mike Bell: Yes, that’s correct, which obviously means you can shoot in much lower light. It also had a lot less grain/noise, which was a look David was keen on.

fxphd: And I assume you received the 5K footage for a 4K master?

Mike Bell: Yes, we received the full resolution and used that for tracking/matchmove, then we worked at and delivered in 4K.

fxphd: It has been reported that there was greenscreen on set, but when shooting in black and white that makes no sense, unless some non-monochrome RED cameras were used for those shots?

Mike Bell: No, there was no greenscreen shoot. This was a question I had for David (Slade) before the shoot started, as the script had quite a lot of driving interiors. He very quickly told me he wasn’t a fan of greenscreen anyway and intended to shoot those for real, which he did.

fxphd: I understand you had GoPros on the RED? Why was that? Was it just for tracking?

Mike Bell: That was because working with black & white footage was a bit of an unknown, so we came up with a plan that gave us the best chance in post. There were a couple of reasons for using GoPros to record everything the RED camera was shooting. Firstly, it was for tracking purposes. At that point we honestly didn’t know how our tracking and matchmove software would react to the black & white footage, and because we had very little time to test it, we decided to use the GoPros as an insurance policy.

David was also very keen to shoot mostly on long lenses with a very shallow depth of field, so many of the plates we would be adding the “Dog” into would be completely defocused. The GoPros also helped with that, acting as witness cameras. In the end it was actually quite easy to track, because of the lack of grain/noise in the plate and because David shot the episode with a very narrow shutter angle, so there was hardly any motion blur.
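Why a narrow shutter angle means less motion blur comes down to simple arithmetic: the shutter is open for (angle ÷ 360) of each frame interval, so the blur streak is the subject’s on-screen speed multiplied by that exposure time. The Python sketch below illustrates the relationship; the 24 fps frame rate, the 960 px/s subject speed and the specific angles are hypothetical values, since the actual settings used on the shoot aren’t given here.

```python
def exposure_time(shutter_angle_deg, fps):
    """Seconds the shutter is open per frame: (angle / 360) of the frame interval."""
    return (shutter_angle_deg / 360.0) / fps


def blur_length(speed_px_per_s, shutter_angle_deg, fps):
    """Streak length in pixels for a subject moving at a constant screen-space speed."""
    return speed_px_per_s * exposure_time(shutter_angle_deg, fps)


# Hypothetical comparison at 24 fps for a subject crossing frame at 960 px/s.
for angle in (180.0, 90.0, 45.0):
    print(f"{angle:>5.0f} deg: {exposure_time(angle, 24):.5f} s open, "
          f"{blur_length(960, angle, 24):.1f} px of blur")
```

Halving the shutter angle halves the streak, which leaves crisper features in each frame for a tracker to lock onto.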

The GoPros were also useful for colour reference. Even though the end product was going to be in black & white, we thought it would be very useful to have colour reference for lighting the CG.

fxphd: Was there a physical prop used for alignment or any non-hero shots?

Mike Bell: Yes, we had a model built for shooting so the cameras could frame up and get the focus right. Then we would remove it just before the take.

fxphd: What was Bella (Maxine Peake) reacting to or acting across from on set?

Mike Bell: We always framed up with the stand-in model, and if the “Dog” was off screen it was left there for Maxine to react to. In fact, even in the shots where the “Dog” is walking/running we had a stand-in model on a wheel, pushed along by a puppeteer! These takes were actually used in the first cut, which, as you can imagine, was hilarious! And not at all the mood the episode was looking for.

fxphd: Could we ask about the pipeline, please? What were the robots modeled in, and especially, what were they rendered in?

Mike Bell: The “Dog” was modeled in Maya and rendered in Clarisse.

fxphd: How key was texturing of the robots to make them feel real?

Mike Bell: It was hugely important! We knew we were going to get very close to the robot, so the texturing and look development stage was as important as the modelling itself.

We had four different stages:

- The original state was how we find it at the start of the episode. It was supposed to look like it had been through the wars a bit, but not too damaged: some scuffs, paint scratched off revealing metal, that kind of thing.

- The second stage was after the car goes over the cliff, so a few big dents, more scratches and a cracked visor.

- The third was once the paint had been thrown.

- The final stage is at the end, when it’s been shot, revealing what’s under the visor.

The software used for texturing was Mari.

fxphd: Is it true real LIDAR scans were used to create the scenes shown from the dog’s perspective? If so, how did you treat them?

Mike Bell: Yes, we LIDAR’d absolutely everything! Not just for the robot POVs but also for the many different terrains that it would be walking on. For the POVs, we used the scanned static LIDAR geometry as a starting point. We then scattered a point cloud at three different densities so that our compositors could create a scanning effect, which looked like the environment was constantly building up and refining in detail. For the moving POVs, such as when the “Dog” looks up at Maxine in the tree, we used an Xbox Kinect, which gave us a real-time moving point cloud. We shot those separately and added them to the static LIDAR scans.
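The multi-density scanning effect can be illustrated with a small Python sketch. It assumes the static LIDAR geometry is available as a plain list of triangles, scatters points uniformly by surface area at three densities, and then reveals the coarse layer first, followed by the medium and fine layers, so detail appears to build up over time. The function names, densities and reveal timing are hypothetical; DNEG’s actual scatter and compositing setup isn’t described beyond the three densities.

```python
import random


def triangle_area(a, b, c):
    """Area of a 3D triangle: half the length of the cross product of two edges."""
    ux, uy, uz = b[0] - a[0], b[1] - a[1], b[2] - a[2]
    vx, vy, vz = c[0] - a[0], c[1] - a[1], c[2] - a[2]
    cx, cy, cz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    return 0.5 * (cx * cx + cy * cy + cz * cz) ** 0.5


def sample_triangle(a, b, c, rng):
    """Uniform random point inside a triangle (reflected barycentric sampling)."""
    r1, r2 = rng.random(), rng.random()
    if r1 + r2 > 1.0:  # fold samples from the far half of the parallelogram back in
        r1, r2 = 1.0 - r1, 1.0 - r2
    return tuple(a[i] + r1 * (b[i] - a[i]) + r2 * (c[i] - a[i]) for i in range(3))


def scatter(triangles, points_per_unit_area, seed=0):
    """Scatter points over a mesh, choosing triangles in proportion to their area."""
    rng = random.Random(seed)
    areas = [triangle_area(*tri) for tri in triangles]
    n = int(round(sum(areas) * points_per_unit_area))
    chosen = rng.choices(triangles, weights=areas, k=n)
    return [sample_triangle(*tri, rng) for tri in chosen]


def visible_points(layers, t):
    """Points shown at normalised time t in [0, 1].

    Each density layer gets an equal slice of time and is revealed
    progressively, coarse first, so the environment seems to refine.
    """
    t = min(max(t, 0.0), 1.0)
    n = len(layers)
    shown = []
    for i, layer in enumerate(layers):
        frac = min(max(t * n - i, 0.0), 1.0)
        shown.extend(layer[: int(len(layer) * frac)])
    return shown


# Hypothetical mesh: a unit square on the ground plane, split into two triangles.
square = [
    ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)),
    ((0.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)),
]
layers = [scatter(square, d, seed=i) for i, d in enumerate((50, 200, 800))]
```

Sampling by area keeps the density even across large and small polygons, which matters for scan geometry where triangle sizes vary a lot.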

fxphd: How procedural was the animation of the walk cycles over the ground, and how much of the leg animation had to be keyframed?

Mike Bell: The project had a very quick turnaround and a lot of animation, so we knew we needed ways to speed that process up. Our animation team developed generic walk and run cycles to begin with. We also had an in-house animation path tool that one of our animators, Iestyn Roberts, developed further for what we needed on Metalhead. It meant that we could draw a path along the LIDAR-scanned terrain and the robot would follow that path from A to B. Its feet would stick to the ground and it would move over lumps and bumps, rocks, etc. This gave us a great starting point and meant we didn’t waste any time at the early animation blocking stage, as we could get quite far quite quickly. Our animators could then go in, clean it up and add all the shot-specific bespoke animation layers that really made it work.
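The path tool is in-house and its internals aren’t described, but the general idea of a path-driven walk with planted feet can be sketched in Python. All of the following are hypothetical simplifications: the terrain is a simple height function standing in for the LIDAR scan, feet are placed on the path centreline with no lateral offset or swing arc, and each foot only re-plants when it crosses a stride boundary, so it stays fixed on the ground between steps while the body keeps moving.

```python
import math


def terrain_height(x, y):
    """Hypothetical stand-in for a height lookup on LIDAR-scanned terrain."""
    return 0.2 * math.sin(x) + 0.1 * math.cos(y)


def point_on_path(path, d):
    """(x, y) at arc length d along a polyline of (x, y) waypoints."""
    for (x0, y0), (x1, y1) in zip(path, path[1:]):
        seg = math.hypot(x1 - x0, y1 - y0)
        if d <= seg:
            f = d / seg if seg > 0 else 0.0
            return (x0 + f * (x1 - x0), y0 + f * (y1 - y0))
        d -= seg
    return path[-1]  # clamp to the end of the path


def path_length(path):
    """Total arc length of the polyline."""
    return sum(math.hypot(x1 - x0, y1 - y0) for (x0, y0), (x1, y1) in zip(path, path[1:]))


def pose_at(path, d, stride=1.0, phases=(0.0, 0.5, 0.25, 0.75), body_height=0.6):
    """Body position and planted foot positions after travelling distance d.

    Each foot re-plants only when it crosses a stride boundary (offset by its
    phase), so between steps it stays put while the body moves along the path.
    """
    bx, by = point_on_path(path, d)
    body = (bx, by, terrain_height(bx, by) + body_height)  # body rides above the ground
    feet = []
    for phase in phases:
        offset = phase * stride
        step = math.floor((d + offset) / stride)
        # Plant point is the last boundary this foot passed, clamped to the path start.
        plant_d = max(step * stride - offset, 0.0)
        fx, fy = point_on_path(path, plant_d)
        feet.append((fx, fy, terrain_height(fx, fy)))  # foot sits on the terrain
    return body, feet
```

Staggering the four phases gives a basic quadruped gait; in the workflow described above, a generated pass like this would only be a blocking starting point for animators to refine.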

fxphd: Were there other key challenges for you on this project?

Mike Bell: There were many challenges to overcome on this project. One of the main ones, which I think our animators pulled off brilliantly, was making sure the robot moved mechanically and not like a living, thinking animal. It had to look like it didn’t have a natural desire to kill, but that it was programmed to do so. Our robot was modelled as if it were a real working object, so it had all the correct mechanisms, pistons and hydraulic cables to move as it would in the real world. So our animators also had to think in the same way. If our model couldn’t feasibly move its limb to climb over something, then our animators had to think of a clever workaround that could really happen. Hence the moment when it slips on some rocks.

We studied hours of videos from sources such as Boston Dynamics and Festo Robotics, and when you looked at the way those robots moved it sometimes looked like bad animation! There were little pops and twitches that were so unnatural you wouldn’t normally put them in, but in the end it was those moments that really made it work and made it creepy. As I said earlier, the first cut featuring the puppeteered model was funny, so we knew from the start what we were up against. We knew we were treading a fine line between terrifying and comical, and thankfully, in the end, I think we pulled off terrifying!

Overall, it was a really exciting project to work on. It’s quite rare to work on such a unique show and get to do such original work! I’m incredibly proud of what we achieved, and to be part of such an amazing team of artists and production crew at DNEG!