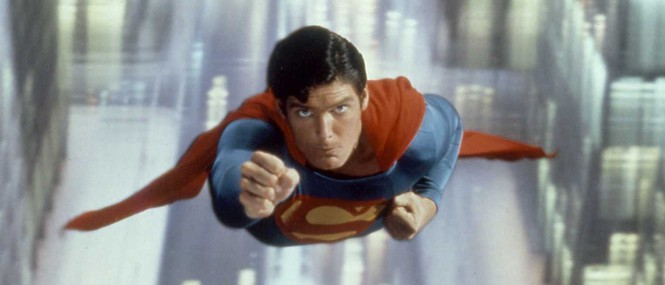

With Man of Steel having been released this year and with fxphd’s new History of VFX course, we thought we’d go back in time and look at the pioneering visual effects technology used on the Christopher Reeve Superman films – the Zoptic front-projection system. We talk to the man behind the system, Zoran Perisic, who was a joint winner of an Academy Award for Superman’s visual effects in 1978 and the recipient of an Academy Scientific and Engineering Award and a Technical Achievement Award.

fxg: Let’s go back to the beginning – what led to you developing the first Zoptic system?

Perisic: We had a lot of challenges on Stanley Kubrick’s 2001 – A Space Odyssey with spacecraft and rockets flying against star backgrounds; I felt that there had to be a more efficient way than rotoscoping and hand-painted mattes. Later, while working at Yorkshire TV in England, I was experimenting with slit-scan, using back-projected live action images instead of back-lit moiré patterns as we had done on 2001 – A Space Odyssey. The results were interesting but of limited practical use. I wondered if I could use a thin strip of front-projection material in place of a regular clear slit; I could change the shape of the slit for each frame and so animate the slit-scan distortion effect. This would require mounting a small projector in front of the camera on the slit-scan machine I had built (and installed in our spare bedroom, much to the annoyance of my wife).

However if I were to front-project a single frame onto a slit as it “scanned” across the full image the result would be an exact copy of the projected image even if the camera/projector unit was tracking in towards it (the projected image gets smaller but so does the image area seen by the camera lens!).

Needless to say I abandoned that experiment but was intrigued by the idea. Then a thought struck me – what if I were to use the same camera-projector unit to track towards a regular front projection screen while front-projecting a full moving image at 24 fps? (Auto focus and projector iris compensation would, of course, be required but that was all do-able). The result would be a perfect one-to-one copy of the projected image but an object placed in front of the f.p. screen would appear to get nearer as the camera unit tracked towards it resulting in an apparent movement in depth. Bingo!

That, in essence, was the genesis of the Zoptic front-projection system.

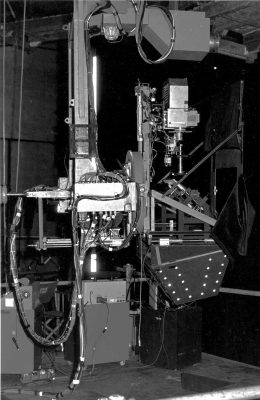

The next step was to make a compact camera/projector package that was manoeuvrable so that the “object” placed in front of the front-projection screen could be made to appear to move in any direction within the frame as well as towards or away from the camera while in fact it remained stationary.

Using synchronized zoom lenses on the projector and camera enabled the system to create much subtler and faster maneuvers…and then came a superhero character whose costume featured a blue suit and a red cape – the very colors that make it virtually impossible to derive a good travelling matte using the color difference process (commonly referred to as the “blue screen” or “green screen” process). Front-projection was the best option.
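The geometry behind this can be sketched in a few lines of Python. This is only an idealized illustration, not a model of the actual Zoptic hardware: it assumes a simple pinhole-style lens, a projector and camera sharing one optical axis via the beam splitter, and made-up distances and field angles. The function names (`view_width`, `background_fill`, `subject_fill`) are hypothetical.

```python
import math


def view_width(distance_m: float, field_angle_deg: float) -> float:
    """Width covered at a given distance by a lens with this horizontal field angle."""
    return 2.0 * distance_m * math.tan(math.radians(field_angle_deg) / 2.0)


def background_fill(screen_distance_m: float,
                    projector_angle_deg: float,
                    camera_angle_deg: float) -> float:
    """Fraction of the camera frame width filled by the projected image on the screen."""
    projected = view_width(screen_distance_m, projector_angle_deg)
    seen = view_width(screen_distance_m, camera_angle_deg)
    return projected / seen


def subject_fill(subject_width_m: float,
                 subject_distance_m: float,
                 camera_angle_deg: float) -> float:
    """Fraction of the camera frame width filled by a subject in front of the screen."""
    return subject_width_m / view_width(subject_distance_m, camera_angle_deg)


# Illustrative numbers only: a 2 m wide subject, 6 m from the unit,
# with the screen 12 m away. Zooming both lenses together from 40 to 10 degrees.
for angle in (40.0, 20.0, 10.0):
    bg = background_fill(12.0, angle, angle)   # lenses kept in sync
    fg = subject_fill(2.0, 6.0, angle)
    print(f"field angle {angle:4.1f} deg: background fill {bg:.2f}, subject fill {fg:.2f}")
```

With the two lenses in sync, the background fill stays at one for any field angle or screen distance, while the subject fills more of the frame as the angle narrows or the unit tracks in. Passing different projector and camera angles to `background_fill` shows why the zooms have to be locked together.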

fxg: What were the challenges that front projection was perhaps not meeting before then?

Perisic: Front-projection was used at first as an alternative to back-projection for live action compositing because it was inherently more efficient. It used the same pin-registered projector with the addition of a beam-splitter mirror in front of the projection lens and a camera support (in much the same way as the first digital scanners consisted of a standard optical printer with a digital camera mounted in place of the film camera – a good example of evolution). Specially designed front-projection units came later, equipped with nodal heads enabling the camera to pan and tilt across the composite image and even pan off the beam splitter onto an extended foreground set. It was still essentially a fixed setup, just like back-projection, but it required much less stage space.

I first came in contact with front-projection on 2001 – A Space Odyssey during the shooting of the ‘Dawn of Man’ sequence. The equipment was devised by Tommy Howard and used to project large format stills taken in Africa. It was an unwieldy, cumbersome contraption but the results were incredible. (The set was built on a rotating stage to facilitate changes of angle, with a large front-projection screen behind it.)

fxg: Can you talk about the camera, projector, lighting, lens, rigging equipment that made up Zoptic? How did this change over time?

Perisic: The bulk of a typical projector designed for back-projection, and later adapted for front projection, consisted of a massive lamp-house. Since the front-projection method required much less light the bulky lamp-house was replaced by a very much smaller unit.

There are two schools of thought on the best way to achieve the maximum brightness from a projector. One is to throw as much light into the film gate and hope that most of it goes through the film plate and is picked up by the lens and ends up as an image on the screen. The alternative approach is to trace the light path from the exit pupil of the lens back through the film gate and to the light source; then to design appropriate optics that will funnel the light bundle along the same optical path and into the lens. A specially designed light source allows for the light path to be bent and a dichroic filter inserted so that only the light in the visible spectrum is reflected towards the lens and the heat is filtered out.

The projector shutter is dispensable for front-projection because the camera shutter blanks out the image in between frames anyway. (I also find that this helps with balancing the lighting of the foreground element to the background image.) On Superman The Movie the publicity department needed a still of Superman flying; a stills camera was mounted in place of the film camera and, with the projector running at speed, a burst of shots were taken without any attempts at synchronization. As a result some of the shots had a vertical smear in the background which added to the illusion of speed. One of those shots became the poster shot for Superman.

As a result a small tungsten halogen lamp can be used to produce a brighter image and of the same color temperature as the lamps used to light the set, which in turn makes it easier to achieve a good color balance between the projected image and foreground element. It also made it possible to use a camera lens on the projector without the risk of damage. However projecting through a camera zoom lens with an anamorphic rear unit is a touch more difficult.

On the original Superman we used Cooke 5:1 zooms with Technovision anamorphics both on the projector and on the camera. On Superman II we used the newly developed f2.8 Cooke Super Cine Varotal 10:1 zoom (25-250mm) on the camera and the 8-perf VistaVision format on the projector (the Super Cine Varotal could be configured for 35mm or VV format). On some of the scenes we used VistaVision to VistaVision in order to generate a composite plate and then used that plate to composite another element as VV to 35mm anamorphic (e.g. aerial fight scenes and chases through Metropolis).

Another addition on Superman II was the Zoptic Flying Rig, which was suspended from the ceiling, had 360-degree rotation in addition to pan and tilt, and could be operated manually or remotely. (We also had a motion control computer unit that could record and play back the Flying Rig moves but soon discovered its limitations for most live action shots.) I designed this rig and had it built during the hiatus between the end of production on Superman and the “resumption” of shooting on Superman II. (The major portion of Superman II had already been shot by Richard Donner back-to-back with the first movie, except for visual effects.)

Superman II had the most challenging flying effects of all 3 movies that I was involved with and also achieved the highest quality both in terms of image quality and flight agility.

For reasons known only to the production managers of this world Superman III was shot with 35mm on both projector and camera using Cooke Super Cine Varotal 10:1 zooms and the Flying Rig. I was acting only as a consultant on that one and had, by then, set up base at Paramount Studios in Hollywood with my second complete Zoptic Flying Rig.

fxg: Can you take us through a typical Zoptic shot from say Superman or Superman II – such as a scene of Superman flying past Metropolis buildings?

Perisic: Non chasing Superman down the street at night was one of those shots that required VistaVision to VistaVision compositing and then VistaVision to 35mm anamorphic. Because helicopters were not allowed, the background plates were shot from the back of a camera car. As Superman and Non were flying just above the street lights it was difficult to keep them in the clear area between the light flares in order to maintain the illusion that they were above and behind the lights.

I got an artist to rotoscope the lights in the original background plate and produce a hi-contrast plate which had only clear circles on a black background. A sync mark was made in the camera gate before each take and at the end of the take the film was rewound in the camera; the hi-contrast plate was loaded in the projector and projected on a clear area of the f.p. screen as a superimposition. A diffusion filter in front of the camera lens made the white blobs of the hi-contrast plate flare out to match the flares of the street lights. This of course could have been done later on an optical printer but that would have involved going through another generation.

Another example of this double-plate technique is Superman kicking Non. Superman flies in towards the camera from top left of frame, somersaults backwards and kicks Non who has flown into the frame from the right. A pole arm was placed through the front projection screen; at the end of the pole arm is a body mold attached by means of a mechanical joint allowing for a pitch and yaw movement. For this shot Chris was lying on his back with his costume covering the body mold which was yawed to a ¾ forward position. At the start of the shot the Zoptic Flying Rig is rotated 180 degrees so that the camera is effectively upside-down – this makes Superman look the right way up – and as the Zoptic unit rotated to normal position the body mold was yawed through 90 degrees so that the feet were pointing towards the camera at the completion of the roll.

On the day we did this shot we had rehearsed the move with Chris’s double and waited for him to be released from the main unit (something we had to do a lot of in general as Chris did not like to be doubled). When he came on the flying unit stage I showed him the video assist recording of the rehearsal and started to explain the process. Usually he would want to know every detail of the process but on this occasion he just shook his head saying: “Whoa! That’s way too complicated. Just shout when you want me to do the kick.”

Probably the most difficult shot involved five people in the air – when the 3 bad guys fly to the North Pole taking Lois Lane and Lex Luthor with them. It involved the use of 3 pole arms on a 90 foot wide curved screen. The pole arms were rotated clockwise and anticlockwise in unison – the Zoptic Flying Rig was also rotated in the same direction cancelling out the apparent rotation effect of the pole arms and so maintaining the appearance of a straight and level flight. As a result the flyers appear to move up and down relative to each other although they are attached to fixed poles.
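A rough way to picture why the flyers seem to rise and fall is to treat the camera roll as a 2D rotation of everything in frame. The sketch below rests on several assumptions: the roll is about the optical axis through the frame center, the pole positions are invented for illustration, and `apparent_view` is a hypothetical helper rather than anything from the Zoptic system.

```python
import math


def apparent_view(pole_positions, pole_roll_deg, camera_roll_deg):
    """Return (apparent flyer roll in degrees, pole positions as seen in frame).

    Rolling the camera by an angle makes everything in frame appear rotated by
    the opposite angle about the frame center. Each flyer's own roll on its
    pole arm is offset by the camera roll.
    """
    a = math.radians(-camera_roll_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    in_frame = [(x * cos_a - y * sin_a, x * sin_a + y * cos_a)
                for x, y in pole_positions]
    return pole_roll_deg - camera_roll_deg, in_frame


# Three poles side by side across the screen (arbitrary units, frame center at 0, 0).
poles = [(-3.0, 0.0), (0.0, 0.0), (3.0, 0.0)]

for roll in (-10.0, 0.0, 10.0):
    # Pole arms and Flying Rig rotate in unison, by the same angle.
    flyer_roll, seen = apparent_view(poles, roll, roll)
    heights = ", ".join(f"{y:+.2f}" for _, y in seen)
    print(f"rig roll {roll:+5.1f} deg: flyer roll {flyer_roll:+.1f}, heights in frame {heights}")
```

Because the rolls match, each flyer stays level in frame, but the outer poles swing to different heights as the rig rolls one way and then the other, which reads as the flyers moving up and down relative to each other.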

fxg: What are some of the other camera, effects and other projects you have continued to work on and be involved with?

Perisic: I designed the Moving Stills Front-Projection unit which utilizes a large format still of the background image while only a relatively small area of that image is seen (projected) by the projection lens. The compound carrying the transparency (background image) is moved independently of the projection lens and linked to the gearhead supporting the unit so that when the camera pans and tilts across the f.p. screen, the appropriate area of the transparency is projected by an instant motion control unit. This unit was used on Sylvester Stallone’s Cliffhanger as well as on Gunbus/Sky Bandits and The Phoenix and the Magic Carpet.

I also developed the Zoptic Blue/Green Screen front-projection unit, which allows a narrow band of a specific color (blue, green etc.) to be projected so that a clean, uncontaminated color separation matte of the subject can be extracted. It was used on Batman Returns, where Batman and Catwoman’s shiny black costumes presented a particular problem with blue screen wrap-around contamination.

And, of course, Z3D. A single camera, single lens, format independent, 3D system for use with both film and digital cameras with an easy to use convergence control and an optical or electronic 3D viewfinder. It can also be used for Front-Projection 3D!

fxg: What are your thoughts on the move from optical to digital effects?

Perisic: I look upon this as Evolution rather than Revolution and can see a real danger of “throwing the baby out with the bathwater” if one takes the “revolutionary” approach. It has made the optical printer obsolete but has given rise to the film scanner, digital Inter-negative etc. Green has largely taken precedence over the Blue for screen backing particularly with digital cameras…but it is still the same color separation process that enables the creation of a live action matte, regardless of how the elements are composited.

Well-made and well-executed shots using models and miniatures are generally more convincing than CG shots that look like CG shots. There seems to be a temptation to use CGI animation in live action scenes when it is not necessarily the best solution to a specific FX requirement. As with any other VFX technique, it might be good to remember that “just because one can do it – does not mean that one should do it.”

Front-projection has always been more of a niche technique, unlike back-projection and blue screen color separation, which were more widely used. The reason, I suspect, is that front-projection requires somewhat more sophisticated equipment in terms of optics, and that equipment is in limited supply as these units are not mass produced. There is also a tendency to go for the color separation solution because the shots can then be farmed out to various VFX houses for digital compositing. Often the green or blue screen elements end up being shot before the backgrounds, which is a bit like putting the cart before the horse.

The use of digital cameras does not automatically preclude front-projection. The moving stills front-projection works perfectly well with any digital camera as there is no need for synchronization – but there is a need for the background plate to be shot first!

Standard front projection requires synchronization between the projector and camera, but this too is no longer limited to film cameras only. In fact projecting a film plate and photographing the composite image with a digital camera offers some interesting advantages in terms of resolution and color balance between the projected background and the foreground. The projected plate can also be originated on a digital camera or as a CG element and printed out to film for front-projection compositing. (We did a 7-Up commercial many years ago, when CGI was in its infancy, by compositing live action with CG animated background which had been printed out to film.)

However using a digital projector in place of a film projector for front-projection would require finding solutions to some major problems in connection with the projection lens and the lamp-house.

There are still only two ways of generating mattes for compositing live action elements (apart from “in camera” solutions such as mirror shots, split screens, rotoscoping and wire removal):

a) Color separation (green screen, blue screen etc.)

b) Process-projection (back-projection, front-projection)

In terms of lighting, front-projection is more suitable for back-lit and cross-lit scenes, whereas the color separation approach is more suitable for flat, front-lit scenes. Interactive lighting is extremely important in compositing; it enables the DP to blend the foreground and background elements into one coherent image instead of two or more elements spliced together. With front-projection the DP has complete control of the final image – what you see is what you get.

fxg: Can you give your impressions on say Man of Steel and its visual effects?

Perisic: Wall-to-wall CGI must have provided a lot of work. With the new costume for Superman it should have been easier to derive good color separation mattes and produce some effective flying shots. But, alas, the flying is reduced to a few “hovering” shots (wire removal) and a lot of zapping streaks accompanied by very loud bangs.

As for 3D – it was a missed opportunity. The 2D to 3D conversion can only produce a “pseudo” 3D at best with a limited depth illusion behind the screen, but at least it offers an opportunity for some of the CG elements to be re-rendered with a second viewpoint to create at least a few shots that have real depth and can on occasions come forward from the screen plane.

To think that here was a unique opportunity to have Superman fly through the screen and right over the heads of the audience! Such a shot would not need to be accompanied by a loud bang – but it would need to be shot in 3D!

Find out more about Perisic’s work at www.zoptic.com.